Let $R$ be a symmetric relation on a set $S$ of size $n \geq 2$. For $b \in S$ let $\beta_b = \{a \mid b R a \wedge a \neq b\}$, and let $k(b)=|\beta_b|$, which we call the cardinality of $b$.

Theorem:

$\exists a,b \in S : a \neq b \wedge k(a) = k(b)$

Proof:

Assume no such $a$ and $b$ exist. Then all $n$ elements of $S$ have different cardinalities, so we need $n$ distinct values. The maximum possible cardinality is $n-1$, so the cardinalities must be exactly $0, 1, \ldots, n-1$. In particular, some element $y$ has cardinality $n-1$, i.e. $y R a$ for every $a \neq y$. Since $R$ is symmetric, $a R y$ as well, so every element other than $y$ has cardinality $\geq 1$, and $y$ itself has cardinality $n-1 \geq 1$ because $n \geq 2$. Hence no element has cardinality $0$, so we cannot have $n$ different cardinalities, a contradiction. The theorem holds. $\qed$
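The theorem is the pigeonhole argument behind the familiar fact that in any finite simple graph two distinct vertices have the same degree. For small $n$ it can also be checked exhaustively. Below is a minimal Python sketch, assuming the elements of $S$ are numbered $0, \ldots, n-1$ and $R$ is represented by its set of unordered pairs $\{a, b\}$ with $a \neq b$; the function names are hypothetical, chosen only for illustration.

```python
from itertools import combinations, product


def degrees(n, pairs):
    """Return k(b) for b = 0..n-1, where pairs lists each related pair {a, b}, a != b, once."""
    k = [0] * n
    for a, b in pairs:
        # R is symmetric, so each related pair counts toward both endpoints.
        k[a] += 1
        k[b] += 1
    return k


def has_repeated_degree(n, pairs):
    """True if two distinct elements share the same k value."""
    return len(set(degrees(n, pairs))) < n


def check_all(n):
    """Check the theorem for every symmetric relation on {0, ..., n-1}.

    Pairs (a, a) never affect k because beta_b excludes b itself, so it is
    enough to try every subset of the unordered pairs {a, b} with a != b.
    """
    all_pairs = list(combinations(range(n), 2))
    for flags in product([False, True], repeat=len(all_pairs)):
        chosen = [p for p, flag in zip(all_pairs, flags) if flag]
        if not has_repeated_degree(n, chosen):
            return False  # a counterexample to the theorem would land here
    return True


for n in range(2, 7):
    print(n, check_all(n))
```

The search grows as $2^{n(n-1)/2}$, so it only confirms small cases; the proof is what covers every $n \geq 2$.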

"Beneath this mask there is more than flesh. Beneath this mask there is an idea, Mr. Creedy, and ideas are bulletproof" - V for Vendetta

Monday, December 21, 2009

Sunday, December 6, 2009

Universal services

I have moved my photo album sharing from Facebook to Picasaweb.

Picasaweb has several advantages over Facebook from my point of view. The most important one is the ability to store images at their original quality. Auto-tagging is also a great relief, since it lets you tag people's faces much faster. Also, for shared events everyone can upload to one common album, unlike Facebook, where each person has their own album.

However, this raises a question: what happens to my old pictures on Facebook? For example, how can I find all the tagged photos of a particular person? That's a drawback.

The problem is much more general than it initially seems. The diversity of services is a good thing for competition, creativity, and freedom of choice. However, some services are much more useful when aggregated across all your friends, or at least when items can be shared among different service providers, as with calendars, image sharing, and item recommendation.

Some solution has to be sought. But I conjecture that solving it is impossible: some unforeseen aggregation services might require user approval for privacy reasons, and then aggregation can't be done seamlessly unless all the friends in your network (and in their networks) have actively accepted the terms of the new value-added services of all the different providers. That's impossible without centralized coordination and control over all of humanity. It's just impossible.

So one workaround is to make the move you want within your local circle as much as you can. But this becomes tiring when many new services appear, and when users cling to their old service because of the effort needed to migrate all their data, or at least because of the psychological effect of leaving their old data (and sometimes the old familiar interface) behind.

So, by definition, multiple services coexisting implies that users are distributed among them in some sort of fair share. Unifying and aggregating these services requires at least some sort of unified identification per person. Despite the existence of OpenID, this is not always acceptable to many people, because they just don't like having a single identifiable identity; in other words, "privacy".

Privacy became a concern for me when I noticed that I can get almost enough information about a person from their public Facebook data and their LinkedIn account to know their looks, their friends and community, and their educational and work background.

By the same token, a centralized identity holding all this information can be a big privacy breach if its credentials are lost to a malicious individual or institution. Biometrics are not enough, since they are not available to everyone, and even a PKI is not good enough, both because no central authority knows the identifying information of every individual on earth and because of the (non-zero) possibility of private key loss!

Google tried to unify lots of services in its reinvention of email, the so-called "Google Wave". It's still too early to judge it, but I suppose that something too general cannot be as good as something specific, for one simple reason: the cumulative complexity of adding more and more details to something over-generalized will eventually overwhelm an individual, who might not be able to mentally organize all this information because to the mind it is still one entity: Google Wave.

Even if Google provides a universal communication service, it still has to be integrated into many more services. All blogs and all services with "commentable" content have to support it for it to be truly universal. And even if that happened, the provable trade-offs in human-computer interaction across many factors, including but not limited to hardware costs, bandwidth limits, screen sizes, mobility, privacy, security, wide-spectrum usability, intuitive GUIs, and nitty-gritty feature sets, make it simply infeasible to build one big universal communication service like Google Wave.

It might be solved with some sort of specialization, like a Google Wave for complete beginners who are computer-illiterate and have never used the internet, with a totally different look and feel and a different, simplified set of services. Then it could be feasible, but its identity would have to be different from "Google Wave", i.e. specialized, the opposite of general!

In conclusion, if you have read this far, then I know your name ;)

Friday, October 30, 2009

On spacetime, the incompleteness theorem and the axiom of choice (and wave-particle duality)

NOTE: There is no guarantee that anything in this article is correct. It's just my impression of what I read, and I might have got it wrong or not read enough.

"Past and future...

relativity to the speed of light...

light speed is constant to all observers, the difference is only in red/blue-shift."

1- The terms "past" and "future" are invalid as dimensions because they are relative terms. We can say positive and negative because they are well defined relative to zero. But past and future, by analogy, are like saying positive and negative with respect to an unknown variable x.

2- Assume there is a light wave moving in some direction A, and two observers: one moving in the same direction with speed I, and one moving in the opposite direction with speed K. The speed of light relative to the two observers must have different signs. Also assume I=c; then the "relative" speed between the light wave and the first observer (let it be another light wave to justify this) would be either 0 or c. It should be 0, because otherwise the speed of light does NOT obey the laws of algebra.

3- Red and blue shifts are confirmed by observations. But let's look more closely at the cause. They say the light is blue-shifted if it's coming toward you and red-shifted if it's moving away from you. If it's moving away from you, then how did the light wave reach you? That would mean light waves have no direction and move in all directions at once, differing only in frequency. Imagine a light wave consisting of a single photon: is that even possible?!

I don't mean to say that these results, which are confirmed by many experiments, are incorrect. I only mean that either the explanation provided needs a total rethinking or I need someone to explain it better to me.
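For comparison with points 2 and 3, these are the standard special-relativity formulas: collinear velocities compose as $w = (u+v)/(1+uv/c^2)$ rather than $u+v$, and the longitudinal Doppler shift is $f_{obs}/f_{emit} = \sqrt{(1+\beta)/(1-\beta)}$ with $\beta = v/c$. Below is a minimal Python sketch; the function names are hypothetical, and the sign convention (velocities signed along one axis, $\beta > 0$ when source and observer approach) is an assumption of this sketch.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def add_velocities(u, v, c=C):
    """Velocity in frame S of an object moving at u in frame S',
    where S' moves at v relative to S (all along one axis, signed)."""
    return (u + v) / (1 + u * v / c**2)


def light_speed_seen_by(observer_v, c=C):
    """Speed of a light wave moving at +c, measured by an observer moving at observer_v."""
    # From the observer's frame, the original frame moves at -observer_v.
    return add_velocities(c, -observer_v, c)


def doppler_factor(beta):
    """f_observed / f_emitted for source and observer on one line; beta = v / c,
    positive when approaching (blue shift), negative when receding (red shift)."""
    return math.sqrt((1 + beta) / (1 - beta))


for v in (0.0, 0.5 * C, -0.5 * C, 0.99 * C):
    print(f"observer at {v / C:+.2f}c measures light at {light_speed_seen_by(v) / C:.6f}c")
print("0.5c composed with 0.5c:", add_velocities(0.5 * C, 0.5 * C) / C, "c")
print("approaching at 0.5c:", doppler_factor(0.5))
print("receding at 0.5c:", doppler_factor(-0.5))
```

An observer with mass cannot move at exactly $c$, so the case I=c is only reachable as a limit; for every $v < c$ the formula gives $(c - v)/(1 - v/c) = c$. A receding source still delivers light, just at a lower frequency (factor below 1).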

Gödel's incompleteness theorem can be thought of as similar to the liar's paradox, but I don't think that's true. It says that any consistent, effectively axiomatized formal system strong enough to express basic arithmetic has a statement it cannot prove (incompleteness); otherwise two contradictory statements can be derived in it (inconsistency).

Well, incompleteness is relative to what is to be proven. No set of formal axioms can prove everything. But the theorem implies that no matter how many axioms we add, we cannot prove everything.

Ironically, this theorem is itself unprovable! However, I think we should consider the domain of the field the axioms were set up for. A set of axioms is complete within the set of theorems it proves. If you want to prove something else, you have changed your field and need axioms related to this new field.

I discuss the axiom of choice as an example of that. Think of this result: the axiom of choice is independent of ZF (the Zermelo-Fraenkel axioms without choice)! Well, doesn't that reflect part of the insight of the previous paragraph?

Consider, too, that the axiom of choice seems to be needed when your set has subsets that are actually intervals of the real line. I am not sure whether anyone has thought of this, or whether I am too ignorant or too lazy to read up on it, but doesn't this signal the passage from the domain of "discrete" sets to that of "continuous" sets? In my humble (and possibly ignorant) opinion, there is a difference between infinite sets and continuous sets. The axiom of choice seems to me more like a hack to make the ZF axioms, which come from a completely different domain, work with continuous sets!

Back to the wave-particle duality of light. One experiment that makes me think about it is the diffraction experiment, where you send a ray of light through a hole whose diameter is less than the wavelength and you get diffraction. They claim that if you send photons one by one instead, you'll get the same diffraction pattern, and that this confirms wave-particle duality. However, did anyone consider that this might come from the inability to aim each photon of this wavelength so that it is in the same position when it hits the hole?

Back to the theory of quantum randomness, which says that everything in the quantum world is random. I do not think it's random: seeming random doesn't mean being random. And randomness, no matter how small, cannot build such a large coherent system. There are rules, and not being able to know them has led some of us to interpret them as randomness; shame on you, lazy guys. I agree with Einstein, who said that "God does not play dice".

If anyone has any insights or corrections, they are welcome to comment here for a discussion. Thank you for reading this far; I hope I managed to get my points across.

Wednesday, May 20, 2009

Saturday, April 25, 2009

Friday, April 3, 2009

Wednesday, March 11, 2009

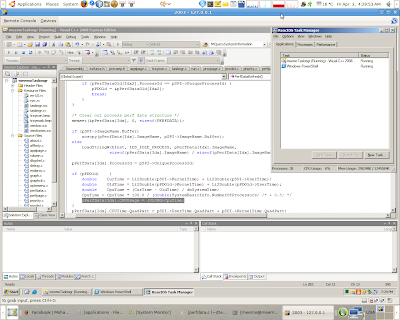

3 years in 40 lines of code: the ArabOS page allocator has finally arrived, thank God!!
